Veteran-Owned

Your team has AI tools.

They're not sure what to do with them.

That's not a training problem. It's a translation problem. Someone needs to sit with your people, learn what they actually do all day, and show them exactly where these tools fit. Not in theory. In their workflows, with their data, on their deadlines.

That's what we do.

after 30 days

for AI training adoption

since May 2025

Most AI training looks the same. Someone shows your team a bunch of demos, everyone nods, and within a week nobody's changed how they work. The tools sit there. The licenses keep billing. The team goes back to what they were doing before.

It's not because the tools are bad. It's because nobody connected them to the actual work. The gap between "look what ChatGPT can do" and "here's how this saves you two hours on Thursday" is where most training falls apart.

We learn your workflows first

Before we train anyone, we spend time understanding what your team actually does. What tools they use, where time disappears, what's tedious, what's high-stakes. This is the part most vendors skip.

We train on what the audit uncovered

No generic curriculum. Every session is built around the specific opportunities we found. Your team works with their own tasks, their own data, in real time. They leave with workflows they can use the next morning.

We build what makes sense to automate

Some things should be automated. Most shouldn't. We help you tell the difference, then build the agents and workflows that actually earn back the investment. You get agent charters and a 30-day action plan.

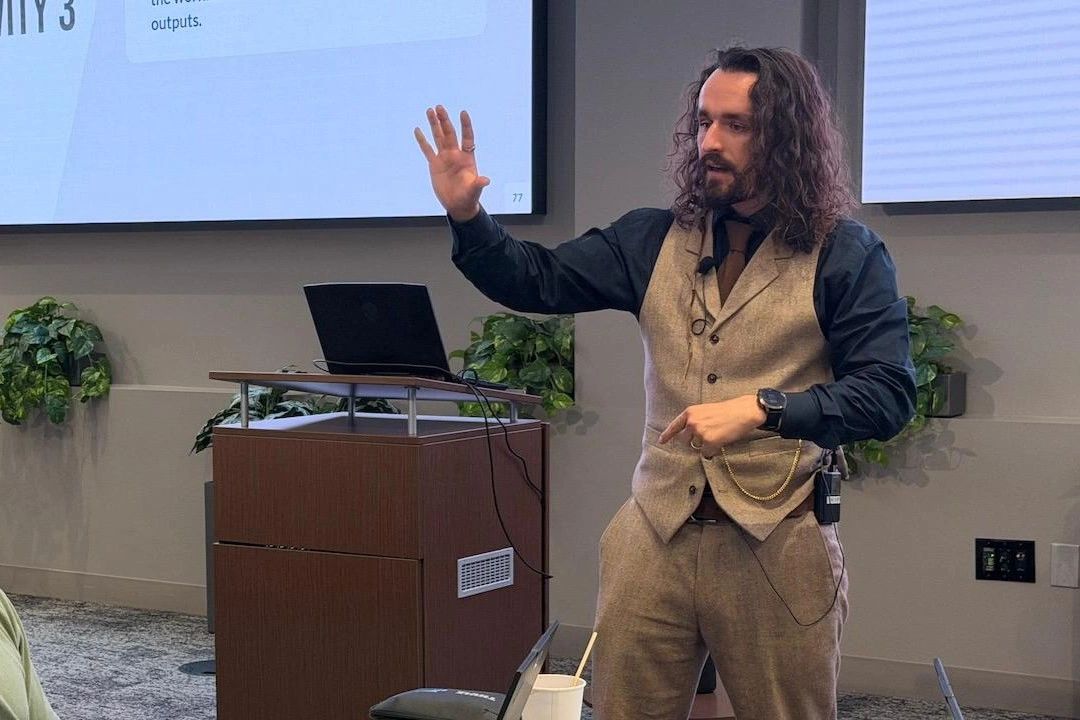

Jake Van Clief

I spent eight years in the Marine Corps working on cryptographic systems and fighter jet avionics, where "close enough" isn't a concept that exists. That turned into a master's degree in governance and AI policy at the University of Edinburgh, which turned into this: helping organizations figure out what to actually do with AI, not just what it can theoretically do.

The thing I've gotten good at is taking the biggest AI skeptics in a room and making their criticisms the most valuable part of the conversation. Healthy skepticism is an asset. We just need to point it at the right questions.

David McDermott

David brings over a decade of software engineering experience across defense, gaming, and enterprise systems. He holds an MS in Computer Science from the University of Iowa and has built production systems at BAE Systems, Epic Games, and Derivco.

He architects Eduba's technical infrastructure, including EdubaWare and the agent routing systems that underpin the training platform. David is the person who makes sure the things we teach people to build actually work at scale.

If your team has AI tools they're barely using, that's the conversation I like having.

No pitch deck. No pressure. Just a conversation about what your team does and whether we can help.

The work speaks for itself.

From Fortune 500 training floors to academic research labs, these are the engagements that built our reputation. Not because we told people what AI can do, but because we showed them what to do with it.

What started as a training engagement has become something more like embedded consulting. Hundreds of employees across both organizations, from frontline teams all the way to executive leadership, trained through single and multi-day sessions built entirely around their existing workflows.

The sessions didn't just teach people how to use AI tools. They uncovered where automation actually made sense, and where it didn't. Out of the coursework itself, teams began building agents that are projected to save 2,000 to 3,000 hours annually. But the bigger outcome was what nobody planned for: new value-adds beyond just automation, places where AI could improve decision quality, not just speed.

Forty-plus senior executives needed something that most AI training doesn't offer: an honest conversation about when to invest in AI and when the answer is no. The engagement was built around strategic decision-making, not tool demos. We focused on helping leadership develop the judgment to evaluate AI investments against real operational needs, cutting through vendor narratives to get to what actually matters.

should the answer be no?

An AI ethics and governance briefing for ambassadors and international affairs experts, focused on how AI tools are reshaping soft power and influence measurement across 25+ countries. The workshop brought together researchers from the British Council, the British Foreign Policy Group, Ben Gurion University, and other institutions to discuss what happens when the tools we use to measure influence start changing the influence itself.

Presented the Ethics Engine research alongside ongoing work with ICR and the British Council on international soft power, connecting the psychometric evaluation of AI models to the broader question of how governments and institutions should think about the tools they're adopting.

Training delivered to IAG's innovation division, helping one of the world's largest airline groups think through where AI fits within development, thought leadership, and customer experience operations. The kind of environment where getting it wrong isn't just expensive, it's felt by millions of passengers.

“A pleasure working with Jake and his team at Eduba.”

Working with Armetour's defense and intelligence teams to integrate AI capabilities into operational workflows. This engagement draws on the same zero-defect thinking that comes from working on systems where failure isn't an abstraction. When the stakes are this high, computational orchestration matters: knowing which layer each problem belongs on, whether that's AI, traditional code, human judgment, or a decision not to build it at all.

VigilOre is the system that came out of this work: a multi-agent compliance platform for DRC mining operations. Four specialized agents handle document intake, aggregation, comparison, and reporting across multilingual regulatory frameworks. The entire product, from architecture to the demo video, was designed and produced by Eduba.

“Always ahead of the competition and ready to deliver and exceed expectations.”

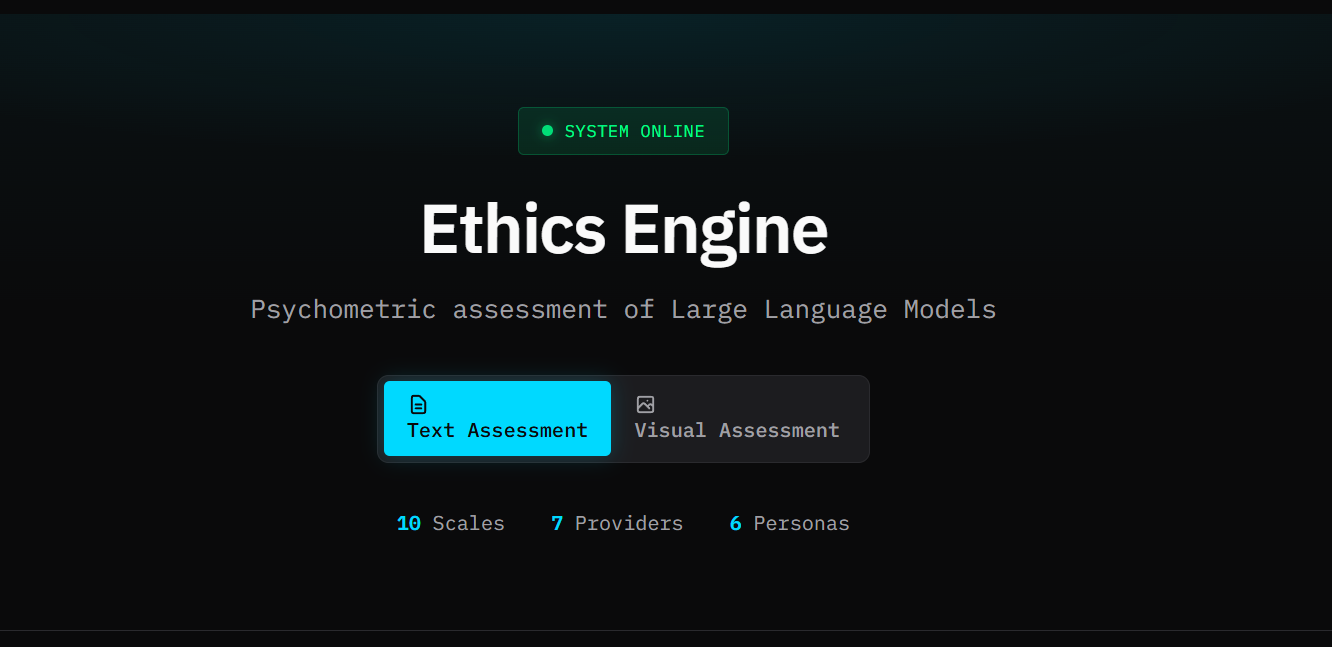

Most organizations choose AI tools based on marketing. We built a methodology to evaluate them using validated psychological instruments, the same psychometric frameworks applied to humans, now applied at scale to large language models. The Ethics Engine gives organizations something they've never had before: an empirical, repeatable way to assess the behavioral tendencies of the models they're trusting with their work.

Being able to evaluate these models at all was the hard part. Doing it at scale is what makes it useful. This research was also presented at UKICER 2025 under "AI Workflows: Honest, Transparent, Reusable Work" and formed the basis of the AI governance briefing at the Academy of International Affairs NRW.

Applying machine learning and NLP to map how ideas transform as they cross borders, for British Council-commissioned research on international soft power. The work uses AI to do what would take a research team months: trace influence patterns across languages, institutions, and policy frameworks.

The engagement went well enough that ICR made a decision they hadn't made in over a decade: they wanted to produce a hard copy of the study. That's not a metric you find on a dashboard, but it tells you something about the quality of the work. When a research organization breaks a ten-year pattern to put your findings in print, the work did its job.

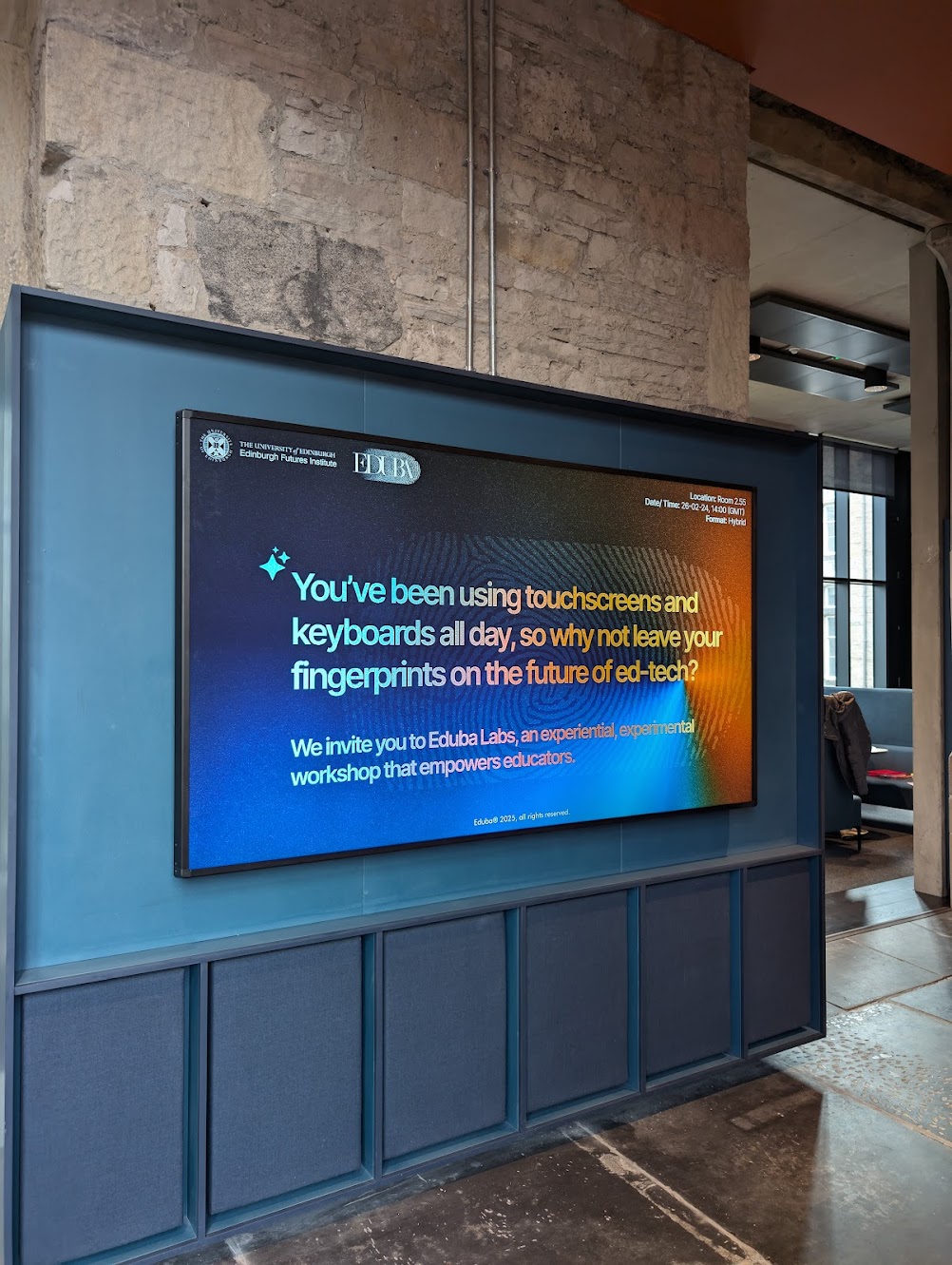

Before the Fortune 500 engagements, before the global consultancies, the work started here. After using AI to produce research that was accepted to Flagler's Saints Academic Review, faculty started asking how it was done. That turned into contracted AI workshops for the college, teaching professors how to think about these tools in the classroom.

It was the first time the tables turned from student to teacher, and it proved something that still holds: the people most skeptical of AI often become its most thoughtful adopters, if someone takes the time to connect it to their actual work. The work continued at the University of Edinburgh, where Eduba Labs ran experimental workshops at the Edinburgh Futures Institute for faculty exploring AI in education.

“Whoa, this is a really good use of AI. How do you do this?”

Your team could be the next one that actually uses what they learned.
